Introduction: Welcome to the Age of Engineered Reality

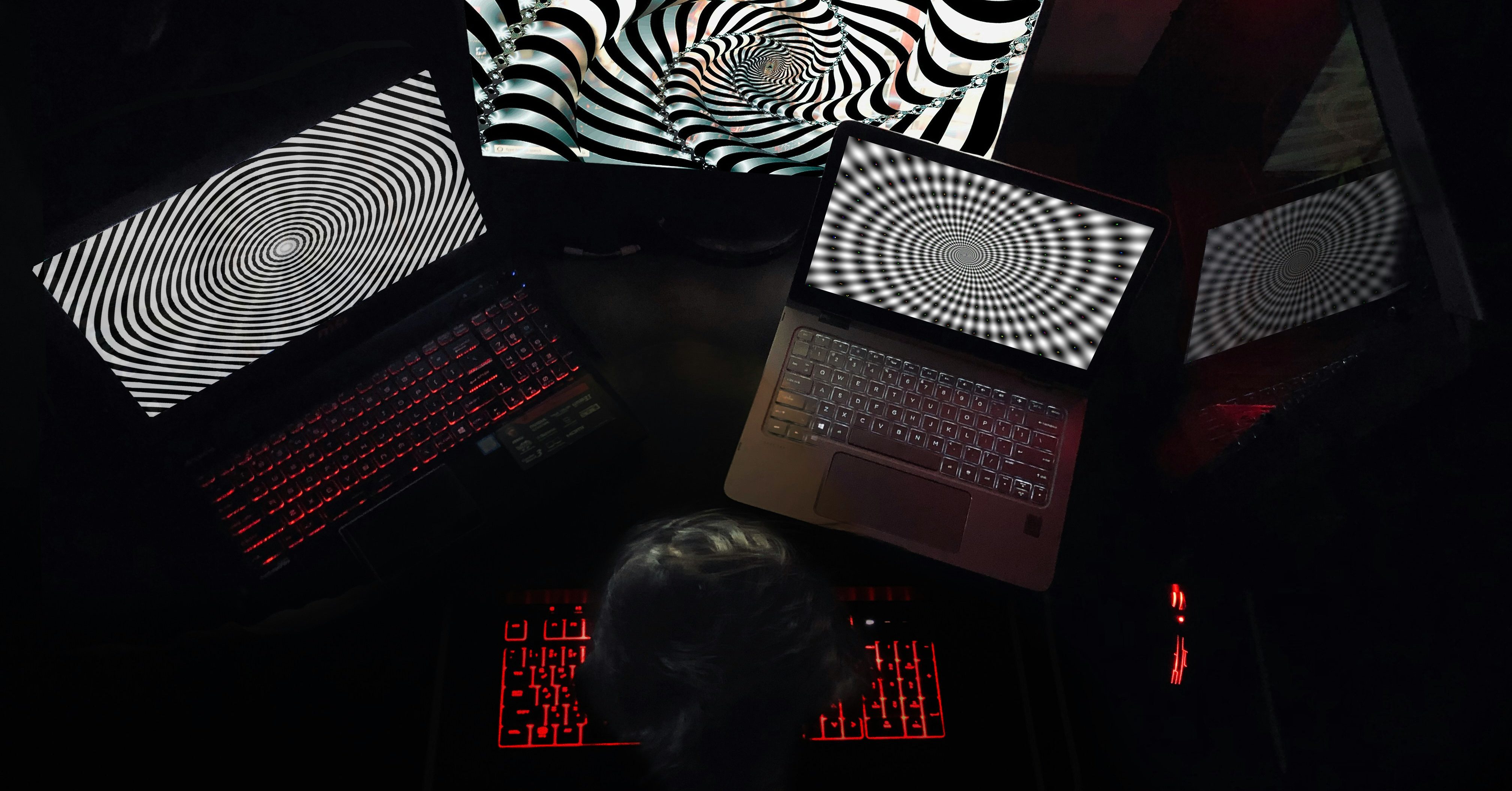

We live in a time when truth can be tailored, trust is fragile, and narratives spread faster than facts. The digital revolution promised access, freedom, and empowerment, but it also opened a Pandora’s box of manipulation. Today, public perception isn’t just influenced by the news we consume. It’s engineered: deliberately shaped by algorithms, influencers, bots, memes, and hidden agendas.

Digital propaganda is not the heavy-handed, state-controlled media of the 20th century. It’s slicker, smarter, and often invisible. It’s embedded in viral tweets, TikTok trends, comment sections, YouTube thumbnails, and “relatable” Instagram reels. It doesn’t tell you what to think; it nudges you toward a conclusion while creating the illusion that you arrived there independently.

Understanding how this works is essential not only for digital literacy but also for the health of democracy itself.

What Is Digital Propaganda?

Propaganda is the strategic dissemination of information, often misleading, to influence opinions or behavior. In the digital realm, it becomes amplified, personalized, and interactive.

Unlike traditional propaganda, which relied on mass broadcasting, digital propaganda operates through micro-targeting and emotional resonance. It blends fact and fiction, leveraging technology to manipulate perception at scale.

Common forms include:

- Disinformation campaigns (deliberate falsehoods)

- Astroturfing (fake grassroots movements)

- Sockpuppet accounts (multiple online identities run by one person)

- Bots and trolls (automated or hired agents of influence)

- Memetic warfare (weaponizing memes for ideological impact)

The goal is rarely to inform. It’s to persuade, divide, confuse, or mobilize, often without you realizing it.

The Mechanics: How Perception Is Engineered

1. Algorithmic Amplification

Social media platforms run on engagement. Their algorithms reward content that triggers strong emotional reactions such as anger, fear, outrage, and even joy. This creates a fertile environment for propaganda.

Once an emotionally charged post starts gaining traction, algorithms amplify it further, creating echo chambers where certain views dominate and opposing perspectives are buried.

Example: During the COVID-19 pandemic, anti-vaccine misinformation surged on platforms like Facebook and TikTok, reaching millions before fact-checkers could respond.
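To make this feedback loop concrete, here is a toy simulation in Python. It is a sketch under loudly stated assumptions, not how any real platform ranks content: the emotional “intensity” scores, the reaction-rate formula, and the number of impressions per feed slot are all invented for illustration. Every post first gets the same small test audience; after that, the feed ranks posts by engagement so far and gives the top slots far more views.

```python
from dataclasses import dataclass


@dataclass
class Post:
    name: str
    intensity: float         # hypothetical score: 0.0 (calm) to 1.0 (outrage)
    engagement: float = 0.0  # expected reactions accumulated so far


def engagement_rate(intensity: float) -> float:
    """Assumed share of viewers who react; rises with emotional intensity."""
    return 0.02 + 0.08 * intensity


def simulate_feed(posts, rounds=10, test_impressions=100,
                  slot_impressions=(1000, 300, 100, 30)):
    """Toy engagement-ranked feed using expected values (no randomness)."""
    # Every post first gets the same small test audience.
    for post in posts:
        post.engagement += test_impressions * engagement_rate(post.intensity)
    for _ in range(rounds):
        # Rank by engagement so far; higher slots receive far more impressions.
        ranked = sorted(posts, key=lambda p: p.engagement, reverse=True)
        for post, impressions in zip(ranked, slot_impressions):
            post.engagement += impressions * engagement_rate(post.intensity)
    return sorted(posts, key=lambda p: p.engagement, reverse=True)


if __name__ == "__main__":
    feed = [Post("calm explainer", 0.1), Post("neutral news", 0.3),
            Post("heated opinion", 0.6), Post("outrage bait", 0.9)]
    ranked = simulate_feed(feed)
    total = sum(p.engagement for p in ranked)
    for p in ranked:
        print(f"{p.name:15} {p.engagement:8.1f}  ({p.engagement / total:.0%})")
```

With these made-up numbers, the outrage-bait post’s per-view reaction rate is only about 3.3 times the calm explainer’s, yet after ten rounds it collects more than 80 times as much engagement and roughly three quarters of the total. The gap comes from the ranking loop, not from the content alone.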

2. Microtargeting

Using data from cookies, browsing history, and app activity, political campaigns and foreign actors can deliver hyper-specific messages to users based on age, gender, location, beliefs, and vulnerabilities.

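This is what made Cambridge Analytica infamous. The firm, which worked for Donald Trump’s campaign in the 2016 U.S. election, had obtained data from tens of millions of Facebook profiles without users’ consent and promised to use it to reach voters with messages tailored to their fears and biases, delivered not by the candidates in their own voice but by digital operators working out of public view.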

3. The Illusion of Consensus

When dozens of accounts repeat the same message, they create an impression of popularity. Because of a psychological tendency called “social proof,” people tend to believe what others seem to believe.

Bot armies and coordinated networks inflate content artificially. The result? Fringe ideas appear mainstream. Lies start to feel like the truth.

Case Study: Russia’s Internet Research Agency used thousands of fake Twitter and Facebook accounts to influence U.S. voters around the 2016 election, posing as Americans and joining polarizing conversations on immigration, race, and gun control.
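Coordinated networks often leave a simple fingerprint: many distinct accounts posting nearly identical text within minutes of one another. The Python sketch below flags that pattern. It is a deliberately simplified heuristic, not the method used by any research group or platform; the `Message` fields, the normalization rules, and the thresholds (three accounts within ten minutes) are assumptions chosen for illustration. Real investigations combine many more signals, and identical posts can be innocent, for example people sharing a popular slogan.

```python
import re
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Message:
    account: str
    text: str
    timestamp: int  # seconds since an arbitrary reference point


def normalize(text: str) -> str:
    """Lowercase, drop punctuation, and collapse whitespace so trivial edits still match."""
    text = re.sub(r"[^\w\s]", "", text.lower())
    return " ".join(text.split())


def find_coordinated_bursts(messages, min_accounts=3, window_seconds=600):
    """Return {normalized_text: sorted accounts} for texts posted by at least
    `min_accounts` distinct accounts within any `window_seconds` span."""
    by_text = defaultdict(list)
    for m in messages:
        by_text[normalize(m.text)].append(m)

    flagged = {}
    for text, group in by_text.items():
        group.sort(key=lambda m: m.timestamp)
        start = 0
        for end in range(len(group)):
            # Slide the window's left edge until it spans at most window_seconds.
            while group[end].timestamp - group[start].timestamp > window_seconds:
                start += 1
            accounts = {m.account for m in group[start:end + 1]}
            if len(accounts) >= min_accounts:
                flagged.setdefault(text, set()).update(accounts)
    return {text: sorted(accts) for text, accts in flagged.items()}


if __name__ == "__main__":
    sample = [
        Message("acct_a", "They are LYING to you about the vote!", 0),
        Message("acct_b", "they are lying to you about the vote", 45),
        Message("acct_c", "They are lying to you... about the vote!!", 120),
        Message("acct_d", "Polls open at 7am, bring ID.", 300),
        Message("acct_e", "They are lying to you about the vote", 90_000),
    ]
    print(find_coordinated_bursts(sample))
```

In the sample, the three near-identical posts from `acct_a`, `acct_b`, and `acct_c` within two minutes are flagged, while the same text from `acct_e` a day later is not, because it falls outside the time window.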

The Role of Influencers, Memes, and Virality

Influencers, especially those on TikTok, YouTube, and Instagram, have become powerful tools in perception engineering. Because audiences form parasocial relationships (one-sided emotional connections) with them, followers often trust influencers more than journalists or institutions.

Soft propaganda spreads through:

- Branded content disguised as lifestyle advice

- Political TikToks masked as satire

- Conspiracy theories presented as “just asking questions”

- Hashtag movements with hidden funding

Meanwhile, memes, though humorous, are potent propaganda vectors. They compress complex narratives into digestible visuals, often with irony or sarcasm, making them both viral and sticky.

Example: QAnon’s rise was fueled in part by meme culture, coded language, and gamification tactics that recruited followers across platforms.

Case Studies: When Digital Propaganda Shaped Reality

1. Brexit and Facebook Dark Ads

In the lead-up to the 2016 Brexit referendum, the Leave campaign reportedly used “dark ads” (personalized Facebook ads visible only to the users they targeted) to reach undecided voters. These ads stoked fears around immigration and national identity, shaping public opinion without public scrutiny.

2. Myanmar and Facebook’s Role in Genocide

In Myanmar, Facebook was the primary source of news for millions. Military officials used fake accounts to spread hate speech against the Rohingya Muslim minority, contributing to what the UN called a “textbook example of ethnic cleansing.”

3. Ukraine and Information Warfare

The Russian invasion of Ukraine has been accompanied by a massive digital propaganda campaign, both externally, to justify aggression, and internally, to suppress dissent. Meanwhile, Ukraine has used digital media to rally global support and combat misinformation, showing how both sides deploy information as a weapon.

Platforms as Propaganda Machines

Social media companies claim neutrality, but their algorithms, policies, and ad systems often enable manipulation.

Facebook / Meta

- Has been repeatedly criticized for allowing misinformation to spread and for enabling political manipulation.

- An internal 2018 presentation, reported by The Wall Street Journal, warned that “our algorithms exploit the human brain’s attraction to divisiveness.”

TikTok

- Rising fast as a news source for Gen Z, but lacks transparency about its algorithm.

- Accused of censoring content critical of authoritarian governments, particularly the Chinese government.

YouTube

- Criticized for recommendations that can pull users down “rabbit holes” toward extremist content.

- Monetization incentivizes sensational content, even if it’s false or harmful.

X (formerly Twitter)

- Despite efforts to curb bots and disinformation, coordinated influence campaigns persist.

- Elon Musk’s ownership has complicated trust, verification, and content moderation policies.

The Psychological Toolkit of Digital Propaganda

To manipulate perception effectively, digital propagandists exploit key psychological mechanisms:

- Confirmation bias: People tend to accept information that supports their beliefs and dismiss what doesn’t.

- Fear appeals: Content that stokes fear spreads faster and influences behavior.

- Repetition: The “illusory truth effect” means that repeated lies start to feel true.

- Tribalism: Propaganda often appeals to group identity, creating “us vs. them” mentalities.

- Scarcity and urgency: “You must act now” messages bypass critical thinking.

By appealing directly to emotion instead of reason, digital propaganda sidesteps critical analysis and implants belief.

Digital Propaganda and Democracy

The stakes are high. When public perception is manipulated at scale, democracy erodes. Misinformation undermines trust in elections, institutions, journalism, and even basic reality.

Examples of democratic erosion include:

- Elections swayed by disinformation

- Health crises exacerbated by conspiracy theories

- Public protests ignited or suppressed through online manipulation

- Polarization weaponized to weaken civic discourse

As philosopher Hannah Arendt warned, when people can no longer agree on what is true, they cannot make rational political decisions. That’s when authoritarianism thrives.

Who’s Behind the Curtain? The Players in Digital Propaganda

State Actors

Countries like Russia, China, Iran, and North Korea actively run propaganda networks to influence global opinion, sway elections, and destabilize rivals.

Private Firms

Companies like Cambridge Analytica showed how data-driven psychological profiling can be weaponized for political gain.

Influencers and Content Creators

Some participate knowingly; others are useful idiots, spreading propaganda unwittingly because it aligns with their worldview or earns engagement.

Ordinary Users

We’re all part of the system. Sharing unverified memes or inflammatory posts helps fuel the machine.

How to Protect Yourself (and Others)

Fighting digital propaganda doesn’t mean unplugging completely; it means engaging critically and responsibly.

1. Verify Before You Share

- Use fact-checking platforms like Snopes, PolitiFact, or Reuters Fact Check.

- Cross-reference sources before reposting “breaking news.”

2. Recognize Emotional Manipulation

- Does a post make you feel angry, afraid, or smug? That’s often the point. Pause before reacting.

3. Diversify Your Media Diet

- Follow a variety of outlets, both domestic and international.

- Seek out opposing viewpoints to avoid echo chambers.

4. Use Tools That Detect Misinformation

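- Tools such as NewsGuard, a browser extension that rates the reliability of news sites; Bot Sentinel, which flags likely bot and troll accounts; and Hoaxy, which visualizes how claims spread across social media, can help assess credibility and detect bots.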

5. Call Out Disinformation With Tact

- Don’t shame others. Politely ask for sources or present counter-evidence to avoid defensiveness.

The Future of Truth: Can We Reclaim Reality?

The battle over perception is also a battle over the future. As AI-generated deepfakes, synthetic voices, and autonomous botnets grow more sophisticated, the line between real and fake is disappearing.

But so are our excuses.

Media literacy, public education, responsible tech development, and regulation must all evolve alongside the tools of manipulation. Platforms must be judged not just by their profit margins but by their role in shaping the public sphere.

Ultimately, perception shapes behavior, and behavior shapes history. If we lose control of how we form beliefs, we lose control of democracy itself.

Conclusion: Behind Every Click, a Choice

Every time we click, post, like, or share, we participate in the shaping of public consciousness. In the digital age, perception is the battlefield, and our minds are the terrain.

Understanding how propaganda works isn’t just a skill; it’s a civic responsibility. In a world where information is abundant but truth is contested, vigilance is our best defense.

The question isn’t whether propaganda exists online. It’s whether we recognize it, question it, and resist becoming vessels for its spread.

Because when perception is engineered, the loss of freedom is not far behind.


Olivia Santoro is a writer and communications creative focused on media, digital culture, and social impact, particularly where communication intersects with society. She’s passionate about exploring how technology, storytelling, and social platforms shape public perception and drive meaningful change. Olivia also writes on sustainability in fashion, emerging trends in entertainment, and stories that reflect Gen Z voices in today’s fast-changing world.

Connect with her here: https://www.linkedin.com/in/olivia-santoro-1b1b02255/